Darran Rolls, Identity Innovation Labs

Last month I missed attending the Science of Consciousness conference at the University of Arizona [1]. The event has long been a critical nexus in the ongoing research into the nature of human consciousness and the theory of mind. Sadly, the event was cancelled this year due to the emergence of a historical “funding connection” with the Epstein estate – make of that what you will. Missing the event was acceptable under the circumstances, but nonetheless a shame, as I was very much looking forward to meeting the leading minds in cognitive neuroscience and furthering my own research into how the human brain makes decisions, understands context and delivers subjective experience or “qualia”.

I “double clicked” into this topic in early 2025 while trying to answer the question “is AI conscious?” Anyone chatting with their favorite foundation model eventually asks the question “are you alive?” LLMs today are undoubtedly “thinking machines”, but are they sentient? Do they have subjective experience, a sense of qualia? Anyone who reads science fiction or has watched the Blade Runner movies must have asked themselves this question and concluded “if not now, then when…”

After thousands of hours of reading and research into this topic, I’ll withhold my personal conclusion on the “mind of the machine” for a later post and instead focus today on what the leading research into human consciousness can tell us about the hot topic of agentic governance. Spoiler alert: the topics are so well aligned, it’s a bit spooky.

Learning From The Hard Problem

Take just a short dive into David Chalmers’ “hard problem of consciousness” [2] and you’ll soon find a strong parallel between how human consciousness works and how we might solve the hard problem of agentic access governance.

Reading (and trying to understand) the leading consciousness research from Chalmers, the likes of Bernard Baars and his Global Workspace Theory [3], or key texts from folks like Daniel Friedman and his Active Inference Model [4] is no light reading, and it’s no simple topic. But if you do, you quite quickly feel the synergy and alignment with the emerging field of agentic access controls and governance. How does one minimize risk and “surprises” whilst avoiding entropy [5]? Over the coming weeks I’ll be diving into this topic a lot more, but for today I want to scan over a couple of the theoretical underpinnings of this work and relate them back to the real-world question of exactly “how” to turn a non-deterministic agentic access model into something a business owner can understand and build into a corporate risk and compliance framework.

“Understanding the long-tail of intent-based access and dealing with the reality of non-deterministic chains of trust requires minimizing governance surprises”

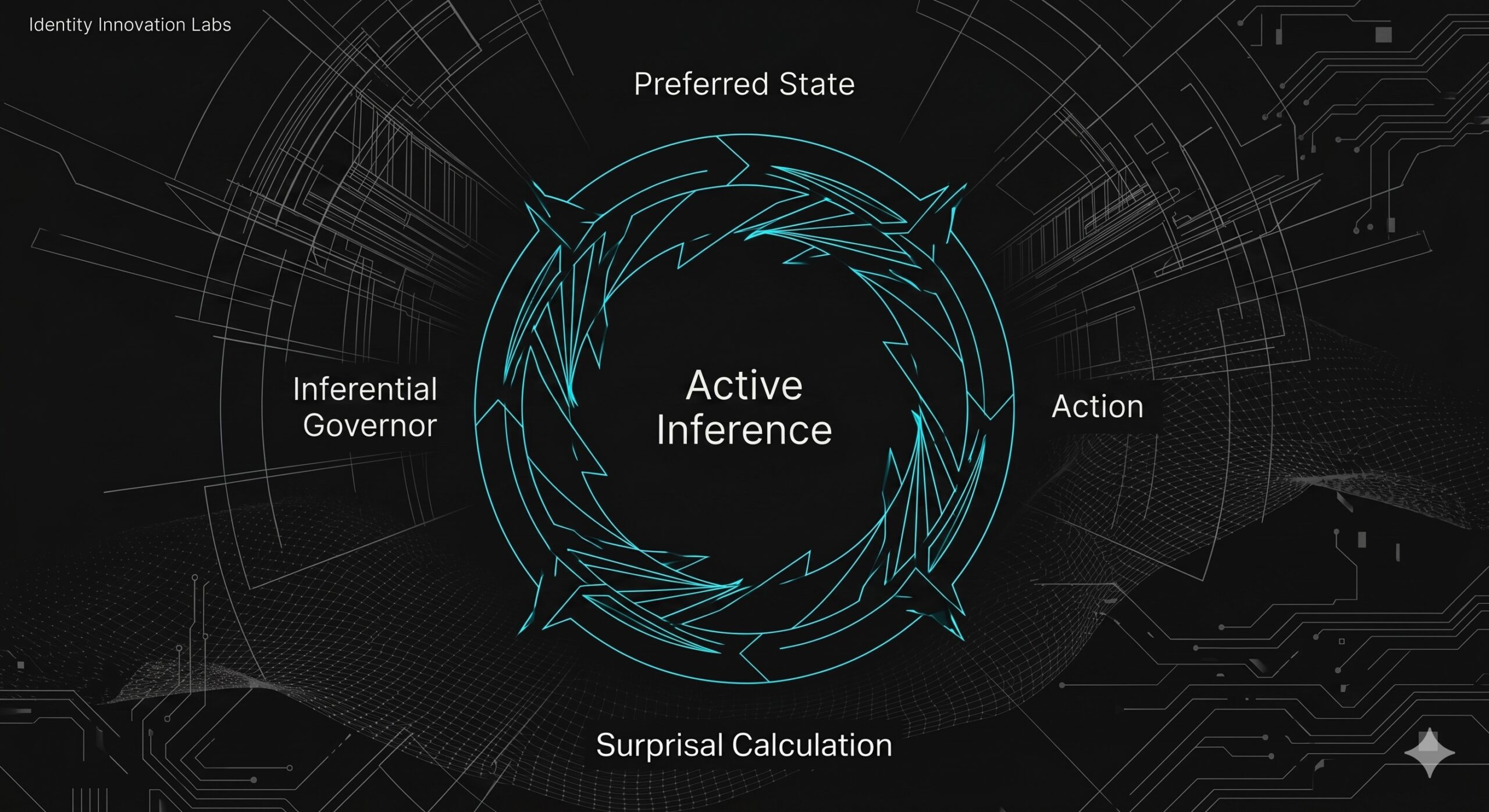

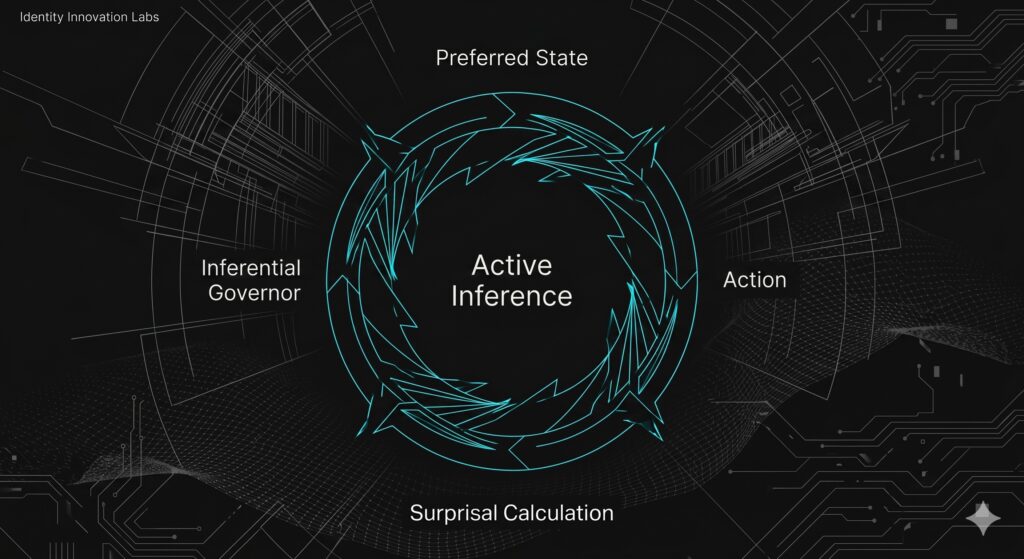

In extreme simplification, Friedman’s unified Active Inference model says that any self-organizing system (from a biological cell to a multi-agent AI ecosystem) survives by maintaining “homeostasis” – a predictable and desirable state of being – using a “generative conscious model” of its world and by predicting the path of least “surprise” or error [6]. In Friedman’s framework, an agent wouldn’t just process data, it would actively predict it. The agent itself would exist in a perpetual loop, forever striving to minimize the potential for entropy while maximizing its goal alignment. Does that sound familiar? Understanding the long-tail of intent-based access and dealing with the reality of non-deterministic chains of trust requires minimizing governance surprises too. When an agent encounters a situation that its authorization model didn’t predict, or it performs an action that results in an unexpected state or access path, governance risk rises sharply. To survive and maintain coherence, the agent must account for the revised risk it is generating. Current research points towards several models for reducing that risk in IAM, ranging from enhanced signaling over something like the Shared Signals Framework [7], to dynamically introducing new control flows such as “Human In The Loop” (HITL) flows that bring things back into line with risk & control expectations.
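
To make “surprise” a little more concrete, here is a minimal Python sketch that scores an access event by its surprisal, -log2 p, where p is estimated from how often that event has been seen before. The `SurprisalModel` class, the add-one smoothing and the event names are all illustrative assumptions of mine; they are not taken from Friedman’s work or from any IAM product.

```python
import math
from collections import Counter


class SurprisalModel:
    """Toy frequency model (hypothetical): rarely seen events score high surprisal."""

    def __init__(self, known_events):
        # Assumption: the set of events the model can score is fixed up front.
        self.known_events = set(known_events)
        self.counts = Counter()
        self.total = 0

    def _check(self, event):
        if event not in self.known_events:
            raise ValueError(f"unknown event: {event!r}")

    def observe(self, event):
        """Record one occurrence of an (agent, tool) access event."""
        self._check(event)
        self.counts[event] += 1
        self.total += 1

    def probability(self, event):
        """Add-one (Laplace) smoothed estimate, so unseen events are never p = 0."""
        self._check(event)
        return (self.counts[event] + 1) / (self.total + len(self.known_events))

    def surprisal(self, event):
        """Surprisal in bits: -log2 p(event)."""
        return -math.log2(self.probability(event))


if __name__ == "__main__":
    read = ("finance-agent", "ledger.read")
    wire = ("finance-agent", "wire.send")
    model = SurprisalModel([read, wire])
    for _ in range(98):
        model.observe(read)
    print(model.surprisal(read), model.surprisal(wire))
```

With 98 routine ledger reads and no wire transfers observed, the wire request is estimated at p = 0.01 and scores roughly 6.6 bits, against about 0.01 bits for another ledger read. A real governance layer would need a far richer model of context and intent, but the shape of the calculation is the same.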

Generative Governance – The Continuous Loop

Delivering Active Inference for agentic access governance likely means giving up on things like deterministic role memberships for NHI and instead focusing on “intent-aligned risk assessment” with dynamic controls close to the decision point, the application data source or the tool usage. It’s not that the old work of static access governance has gone away (we still run mainframes and Salesforce, right?); it’s more that we shouldn’t try to retrofit its access control and compliance frameworks to deal with the “surprises” that will come from an agent model capable of emergent behavioral risk.

“Building a new agentic identity fabric that delivers the required levels of assurance will be of critical importance to the future of autonomous AI systems”

In my view, we should be looking to augment our existing control frameworks to allow agentic systems to adopt a “generative governance approach” that reads a lot like a cross between the Bayesian risk in Global Workspace Theory and Friedman’s Active Inference model. Instead of being a wall of assigned and provisioned access entitlements, governance becomes a dynamic risk model that performs “late-bound control overlay functions” in response to changes in agent trust or authorization behavior. In summary, this could look a lot more like the following:

- Establishing Policy (Preferred State): Just as a biological agent has a “preferred” body temperature, a governance model for agentic systems has “preferred” security states, e.g. “Tokens should only be delegated across specific hops with specific controls”, or when a non-deterministic finance agent makes an unusual trade, it does so with enhanced verification and “overlaid” controls and governance.

- Calculating Risk (Surprisal Avoidance): When an agent requests access to a tool via a pre-defined access path, just like in Friedman’s Free Energy Principle, the governance layer treats this as a “sensory input.” It calculates the gap between the user’s intent, the agent’s request and the preferred security state to make a risk-based access control decision.

- The Dynamic Update (The Pause): If the request is “surprising” (high risk), the governance layer doesn’t just fail the request. It initiates a “Dynamic Pause” to gather more evidence – perhaps requesting a new Just-In-Time (JIT) token, a biometric approval from a human principal, or a more granular intent verification – to minimize the free energy of the transaction before it is committed.

- Expectation Update (Closing the Loop): When emergent behavior has been detected, the overall system “learns” from the results of the last execution and retrospectively kicks off out-of-band compliance and risk assessment processes that review the results and potentially update authorization policies.
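
The four steps above can be sketched as a small Python loop. This is a toy under stated assumptions, not a reference design: the `GovernanceLoop` class, its fields, the 0.5 threshold and the way risk is derived from hop count and an `intent_match` score are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    PAUSE = "pause"  # the "Dynamic Pause": gather more evidence first
    DENY = "deny"


@dataclass
class AccessRequest:
    agent: str
    tool: str
    hops: int            # length of the delegation chain (hypothetical signal)
    intent_match: float  # 0.0 to 1.0: how well the request fits the user's intent


@dataclass
class GovernanceLoop:
    max_hops: int = 2             # preferred state: short delegation chains
    pause_threshold: float = 0.5  # assumed cut-off for a "surprising" request
    review_queue: list = field(default_factory=list)

    def risk(self, req):
        """Gap between the request and the preferred state, scaled to 0.0-1.0."""
        hop_gap = max(0, req.hops - self.max_hops) / max(req.hops, 1)
        intent_gap = 1.0 - req.intent_match
        return max(hop_gap, intent_gap)

    def decide(self, req):
        """Risk calculation: pause surprising requests instead of failing them."""
        if self.risk(req) > self.pause_threshold:
            return Decision.PAUSE
        return Decision.ALLOW

    def resolve_pause(self, req, evidence_ok):
        """Finish a paused request once extra evidence (JIT token, approval) arrives."""
        # Closing the loop: every paused request is queued for out-of-band review.
        self.review_queue.append(req)
        return Decision.ALLOW if evidence_ok else Decision.DENY
```

A request that stays within two hops and closely matches the stated intent is allowed straight through, while a five-hop wire request exceeds the threshold and is paused until `resolve_pause` is called with the outcome of the extra verification. The review queue stands in for the compliance process that would later decide whether the policy itself needs to change.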

Real World Generative Governance Controls

There’s so much to be learnt from the leading theories of consciousness that I’ll be coming back here in future posts. But for now, let’s conclude this introduction to the topic with a concrete scenario. Imagine, if you will, a multi-agent system where a “Planning Agent” delegates a task to a “Financial Agent” to execute a wire transfer. In a traditional model, you’d have a static rule: “Financial Agent can access the Wire Tool.” This provides a horribly “lumpy” and likely unmanageable access entitlement risk profile for a non-deterministic system. In an Active Inference inspired governance model, by contrast, the identity control plane embedded in the infrastructure acts as the inferential governor.

- Task Verification: It evaluates whether the current “intent” of the Planning Agent aligns with the “preferred state” of the organization, as established by more static, historical process flows.

- Delegation Audit: It tracks the “surprisal” of the delegation chain. Has the token been passed through an untrusted or hitherto unseen hop?

- Deterministic Enforcement: If the Bayesian risk (surprisal) exceeds a specific threshold, the governance layer intervenes by placing enhanced controls – maybe a blocking function close to an application or tool access source. Or, in the use case described above, it initiates a HITL interaction, i.e. a real-time re-approval back at the top of the chain of trust.

- Policy Update: The policy system overseeing the net risk and resulting business impact of the action updates its expectations to require a secondary JIT token from the top of the chain and creates a “policy violation” that must be accepted by the business process owner before the next execution of the model is allowed to begin.
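
As a rough illustration of the delegation audit, enforcement and policy update steps in this scenario, the sketch below sums per-hop surprisal along a delegation chain and compares it with a threshold. Everything named here is an assumption for the sake of the example: the hop probabilities, the 3-bit threshold, the default probability for unseen hops and the policy fields. Treating hops as independent is also a deliberate simplification.

```python
import math

THRESHOLD_BITS = 3.0  # assumed risk threshold, in bits of surprisal


def chain_surprisal(chain, hop_probability, unseen_probability=0.01):
    """Sum -log2 p over the hops; unseen hops get a small assumed probability."""
    return sum(
        -math.log2(hop_probability.get(hop, unseen_probability)) for hop in chain
    )


def enforce(chain, hop_probability, threshold=THRESHOLD_BITS):
    """Deterministic enforcement: the same inputs always give the same decision."""
    bits = chain_surprisal(chain, hop_probability)
    action = "hitl_reapproval" if bits > threshold else "allow"
    return {"action": action, "surprisal_bits": bits}


def update_policy(policy, decision):
    """Policy update after an intervention; returns a new policy dict."""
    if decision["action"] == "allow":
        return dict(policy)
    return {
        **policy,
        "require_jit_token": True,  # secondary JIT token from the top of the chain
        "pending_violation": True,  # must be accepted by the business process owner
    }


if __name__ == "__main__":
    # Hypothetical history: how often each hop has appeared in approved chains.
    seen = {"planning-agent": 0.9, "financial-agent": 0.8}
    usual = enforce(["planning-agent", "financial-agent"], seen)
    odd = enforce(["planning-agent", "unknown-relay", "financial-agent"], seen)
    print(usual["action"], odd["action"])
    print(update_policy({"require_jit_token": False}, odd))
```

The familiar two-hop chain scores well under the threshold and is allowed, while the chain that passes through an unseen relay crosses it and is routed to a HITL re-approval. The scoring may be probabilistic, but the enforcement step is a plain threshold comparison, which is what keeps the outcome explainable to an auditor.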

In the new world of agentic access, governance must be as dynamic as the systems it protects. By learning from Friedman’s approach to Active Inference, we can create a single governance envelope for the emerging world of Agentic Access Governance. To be clear, this isn’t just about security; it’s about providing a framework for robust, reliable and predictable audit behavior that helps meet compliance requirements in systems that are, by their very nature, non-deterministic and very hard to pre-approve.

How we go about building a new “agentic identity fabric” that delivers the required levels of assurance will be of critical importance to the future of autonomous AI systems. Simply restricting agents to static policies will not only hinder their growth, it will undoubtedly lead to the next tidal wave of vulnerability and exploitation. So if you’re interested in solving “the hard problem of agentic access governance”, or will be at the KuppingerCole EIC event in Berlin on May 19–23 later this month, shoot me a note or come find me at the event and let’s talk more about the intersection of neuroscience and IAM – two topics more aligned than you might think.

References

[1] The Science of Consciousness Conference, https://cs2026.org/about.html

[2] Chalmers, D. (1995). “Facing up to the problem of consciousness”. Journal of Consciousness Studies, 2(3), 200–219.

[3] Baars, B. J. (1988). A Cognitive Theory of Consciousness. Cambridge University Press.

[4] Friston, K., Friedman, D. A., et al. (2023). A Variational Synthesis of Evolutionary and Developmental Dynamics. Entropy, 25(7), 964.

[5] Friedman, D. A., et al. (2022). Active Inference for Integrated Cognitive Architectures. Biological Cybernetics.

[6] Sajid, N., Ball, P. J., Parr, T., & Friston, K. J. (2021). Active Inference: Demystified and compared. Neural Computation, 33(3), 674–712.

[7] The Shared Signals Framework, Ansell, J., & Cappalli, T. (2024). Shared Signals and Events (SSE) Framework. IETF Draft.